Initial Summary

Artificial intelligence has moved from theoretical curiosity to practical research tool faster than most academic infrastructure has adapted to accommodate it. While universities debate AI policy and journal editors write position statements, researchers face a more pragmatic question: which AI tools actually accelerate research work without compromising quality, and which are solutions looking for problems? This guide examines the specific, tested applications where AI genuinely reduces friction in the research workflow, explains the constraints that determine when these tools work well versus poorly, and identifies the professional contexts where AI adoption creates more problems than it solves.

What AI Tools Can and Cannot Do in Research

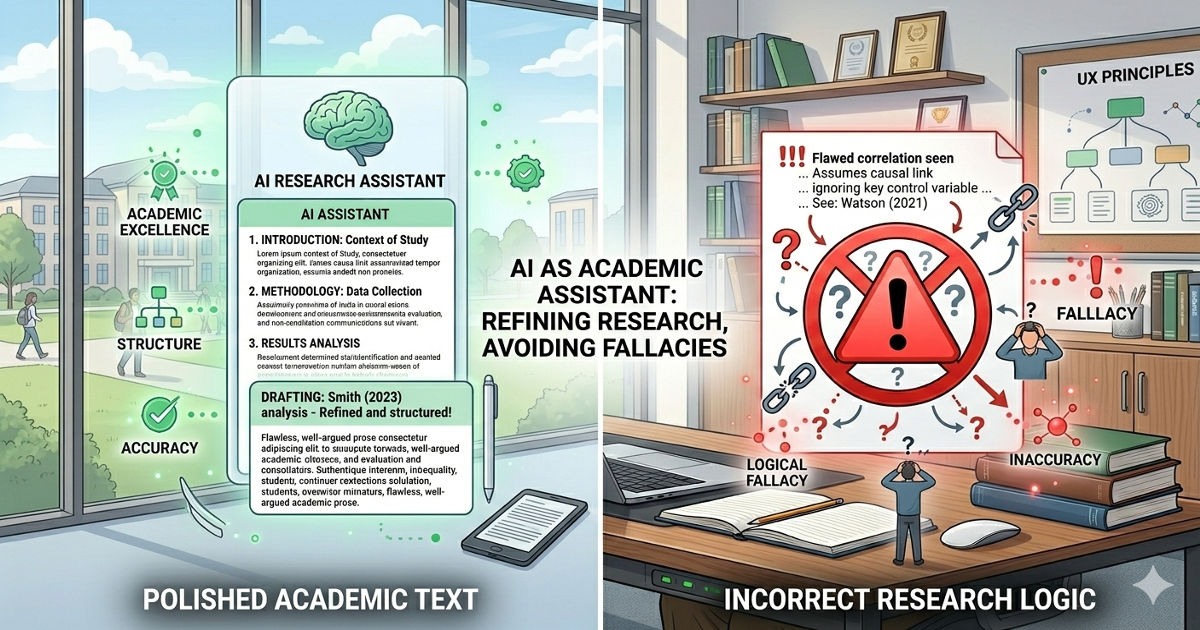

Before examining specific applications, it's necessary to establish what AI language models like GPT-4, Claude, and their specialized variants are actually capable of in a research context. Most misapplications of AI stem from a category error: treating AI as a reasoning engine when it functions as a pattern-matching and generation system.

AI tools excel at tasks requiring pattern recognition, synthesis of existing information, transformation of format or style, and rapid generation of structured text. They perform poorly at tasks requiring genuine novel reasoning, verification of factual claims against ground truth, or maintaining consistency across long logical chains.

Key Insight: AI tools are most useful when you need something transformed, reorganized, or drafted quickly, and least useful when you need something verified, proven, or created with novel intellectual content. The most successful AI users in academia treat these tools as sophisticated research assistants who can draft, format, and synthesize, not as autonomous researchers who can think.

Use Case 1: Literature Review Synthesis

The Problem AI Solves

Reading 50+ papers to understand the current state of a research question is necessary work, but synthesizing those papers into a coherent literature review that identifies gaps, conflicts, and trends is time-intensive mechanical work. A researcher typically reads each paper, takes notes, then manually sorts and synthesizes those notes into themes.

How AI Helps (Correctly)

After reading the papers yourself, you provide AI with your notes or key excerpts and ask it to identify common themes, contradictions, methodological trends, or gaps. The AI produces a first draft synthesis that groups related findings, highlights conflicts between studies, and suggests where the literature is sparse.

Example prompt: "Here are my notes from 30 papers on language acquisition in bilingual children. Identify the 5 major theoretical frameworks mentioned, list which studies support each framework, and note any direct contradictions between studies."

Where This Fails

This approach fails when researchers skip reading the papers and ask AI to "review the literature" based only on paper titles or abstracts. AI cannot assess methodological quality, spot subtle errors in interpretation, or understand which citations are seminal versus peripheral. The synthesis it produces will be structurally coherent but intellectually shallow.

Implementation rule: Use AI to synthesize and organize your understanding of papers you've already read, not to replace reading them.

Use Case 2: Grant Proposal Drafting and Editing

The Problem AI Solves

Grant proposals require translating technical research into language appropriate for reviewers who may not be specialists, fitting extensive ideas into strict word counts, and adhering to specific structural requirements that vary by funding agency. Much of this work is formatting and translation rather than original thinking.

How AI Helps (Correctly)

You provide AI with your technical research plan and ask it to rewrite sections for a non-specialist audience, tighten prose to meet word counts, or restructure sections to match a funding agency's required format. You then review and refine the output to ensure technical accuracy and strategic positioning.

Example prompt: "Rewrite this methods section for a National Science Foundation reviewer who is a biologist but not a specialist in proteomics. The current version is 800 words; it must be reduced to 500 words without losing key methodological details."

Where This Fails

This approach fails when researchers ask AI to generate the research significance section from scratch. AI cannot know why your specific research question matters to your field, which methodological innovations are genuinely novel, or which broader impacts are credible given your institutional resources. These sections require strategic thinking that AI cannot perform.

Implementation rule: Use AI for translation (technical to accessible), compression (long to short), and formatting (your structure to their requirements), not for strategic positioning or significance arguments.

Use Case 3: Teaching Material Development

The Problem AI Solves

Creating problem sets, quiz questions, lecture examples, and assignment prompts requires substantial time but follows predictable patterns. Most teaching materials require variations on established formats rather than genuinely novel pedagogical approaches.

How AI Helps (Correctly)

You provide AI with learning objectives and ask it to generate multiple-choice questions, problem set variations, or example scenarios that test specific concepts. You review the output to ensure questions are at the right difficulty level and that correct answers are actually correct.

Example prompt: "Generate 10 multiple-choice questions for an undergraduate statistics course covering hypothesis testing. Each question should require students to interpret p-values in a real-world scenario, not just calculate them. Include 4 answer options per question with explanations for why each is correct or incorrect."

Where This Fails

This approach fails when AI-generated questions are used without verification. AI frequently generates mathematically incorrect problems, produces answer options where multiple answers are technically correct, or creates scenarios with unstated assumptions that make questions ambiguous. These errors are obvious to experts but confusing to students.

Implementation rule: Use AI to generate draft teaching materials, then verify every detail before distributing to students. In STEM fields especially, always check mathematical correctness.
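One way to apply this rule to the hypothesis-testing example is to recompute the answer key yourself before a question goes out. The sketch below uses only Python's standard library. The scenario numbers and the `two_sided_z_p_value` helper are hypothetical, invented for illustration, and it assumes a one-sample z-test with a known population standard deviation.

```python
from math import sqrt
from statistics import NormalDist


def two_sided_z_p_value(sample_mean, null_mean, pop_sd, n):
    """Return the two-sided p-value of a one-sample z-test."""
    z = (sample_mean - null_mean) / (pop_sd / sqrt(n))
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical AI-drafted question: n = 36, sample mean 103,
# null mean 100, known SD 15. The draft answer key claims p = 0.23.
claimed_p = 0.23
recomputed_p = two_sided_z_p_value(103, 100, 15, 36)

# Flag the question for manual review if the key is off by more than rounding.
key_is_consistent = abs(recomputed_p - claimed_p) < 0.005
print(f"recomputed p = {recomputed_p:.4f}, key consistent: {key_is_consistent}")
```

A check like this only catches arithmetic errors. Ambiguous wording and multiple defensible answers still need a human read.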

Use Case 4: Code Documentation and Commenting

The Problem AI Solves

Research code written during active development often lacks documentation, meaningful variable names, or comments explaining logic. Returning to undocumented code months later, or sharing it with collaborators, requires significant effort to reconstruct what each function does and why.

How AI Helps (Correctly)

You provide AI with blocks of working code and ask it to add docstrings, comments, and variable name suggestions. AI can also generate README files explaining what a script does, what inputs it expects, and what outputs it produces.

Example prompt: "Add docstrings to these five Python functions that explain their purpose, parameters, and return values. Also suggest more descriptive variable names where current names are single letters or abbreviations."

Where This Fails

This approach fails when AI is asked to explain complex algorithmic logic without sufficient context. AI will produce plausible-sounding explanations even when the code's actual behavior differs from its description. For complex algorithms, AI-generated documentation must be verified against the code's actual operation.

Implementation rule: Use AI to draft documentation for straightforward functions and data processing pipelines. For complex algorithms, verify AI explanations by testing whether the description matches the code's actual behavior.
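A lightweight way to test a description against the code is to turn the claims in an AI-drafted docstring into executable examples. This is a minimal sketch, assuming a hypothetical `normalize` function whose docstring was drafted by an AI tool. Python's standard `doctest` module runs the examples embedded in the docstring and reports any mismatch.

```python
import doctest


def normalize(values):
    """Scale values linearly so the minimum maps to 0.0 and the maximum to 1.0.

    (Hypothetical AI-drafted docstring; the examples below are the checkable part.)

    >>> normalize([2, 4, 6])
    [0.0, 0.5, 1.0]
    >>> normalize([5, 5])
    [0.0, 0.0]
    """
    low, high = min(values), max(values)
    span = high - low
    if span == 0:
        # Constant input: avoid division by zero and map everything to 0.0.
        return [0.0 for _ in values]
    return [(v - low) / span for v in values]


# Collect and run only this function's docstring examples.
finder = doctest.DocTestFinder()
runner = doctest.DocTestRunner(verbose=False)
for test in finder.find(normalize, name="normalize", module=False,
                        globs={"normalize": normalize}):
    runner.run(test)

# failed > 0 would mean the documentation and the code disagree.
results = runner.summarize(verbose=False)
print(f"{results.attempted} documented examples checked, {results.failed} failed")
```

If the drafted docstring had claimed, say, that constant input raises an error, the second example would fail and point to the discrepancy.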

Use Case 5: Email and Administrative Correspondence

The Problem AI Solves

Academic work involves substantial administrative correspondence: responding to student questions, coordinating with collaborators, communicating with journal editors, and drafting formal letters. Much of this follows standard professional formats and could be templated if anyone had time to maintain templates.

How AI Helps (Correctly)

You provide AI with bullet points of what needs to be communicated and ask it to draft an appropriately formal email, letter, or response. This is particularly useful for declining invitations, requesting deadline extensions, or responding to routine student questions where the content is standard but the tone must be professional.

Example prompt: "Draft a polite email declining a journal editor role invitation. Key points: I appreciate the invitation, my current commitments don't allow me to provide the attention this role deserves, I hope they'll consider me for future opportunities. Keep it under 150 words."

Where This Fails

This approach fails when the correspondence requires specific factual details that AI cannot know (meeting times, course policies, names of collaborators) or when the emotional tone must be carefully calibrated (sensitive student situations, conflict resolution, complaints). AI-drafted emails for sensitive contexts often sound either too formal or inappropriately casual.

Implementation rule: Use AI for routine professional correspondence where tone is more important than specific details. Don't use AI for sensitive communications or situations where a mistake could damage relationships.

Key Insight: The AI applications that save academics the most time are not the intellectually impressive ones but the mundane ones. AI won't write your novel research contribution, but it will convert your bullet points into a properly formatted decline email, restructure your grant proposal to fit new word limits, or generate ten variations of quiz questions. The cumulative time saved on administrative friction compounds far more than occasional help with complex intellectual tasks.

Use Case 6: Data Analysis Code Generation

The Problem AI Solves

Writing code for standard statistical analyses, data cleaning operations, or visualization tasks requires remembering exact syntax, library functions, and parameter names. This is particularly time-consuming when switching between programming languages or when using libraries infrequently.

How AI Helps (Correctly)

You describe the analysis you want to perform in plain language, and AI generates code that implements it. This is particularly useful for standard operations: reading data files, cleaning missing values, running t-tests or ANOVA, creating common plot types, or reshaping dataframes.

Example prompt: "Write Python code using pandas to read a CSV file, remove rows where the 'age' column is null, create a new column called 'age_group' that categorizes age into bins (0-18, 19-35, 36-60, 61+), and then calculate the mean 'income' for each age group."

Where This Fails

This approach fails for complex custom analyses, statistical methods not widely implemented in standard libraries, or when subtle choices in methodology affect results (handling of edge cases, choice of distance metrics, treatment of outliers). AI will generate code that runs without error but makes methodological choices you may not want.

Implementation rule: Use AI to generate code for standard, well-documented analyses. Always review the code to ensure the statistical approach matches your methodological requirements, not just that it runs without error.
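For the example prompt in this use case, an AI tool might return something like the following sketch. The inline CSV data is hypothetical, standing in for a real file, and the code assumes whole-number ages, with the last bin covering every age above 60. The parts worth reviewing are the ones the prompt never specified: which bin a boundary age such as 18 or 60 falls into, and whether empty age groups should appear in the result.

```python
import io

import pandas as pd

# Hypothetical data standing in for a real CSV file on disk.
csv_text = """age,income
17,0
18,1200
25,42000
35,58000
36,61000
,50000
60,70000
72,30000
"""

df = pd.read_csv(io.StringIO(csv_text))

# Drop rows where age is missing.
df = df.dropna(subset=["age"])

# pd.cut uses right-closed intervals by default, so (18, 35] holds ages 19-35
# for whole-number ages; include_lowest=True keeps age 0 in the first bin.
bins = [0, 18, 35, 60, float("inf")]
labels = ["0-18", "19-35", "36-60", "61+"]
df["age_group"] = pd.cut(df["age"], bins=bins, labels=labels, include_lowest=True)

# observed=True keeps only age groups that actually occur in the data.
mean_income = df.groupby("age_group", observed=True)["income"].mean()
print(mean_income)
```

Code like this runs cleanly either way. Whether right-closed bins are the right methodological choice for your study is a decision only you can make.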

Use Case 7: Research Paper Editing for Clarity

The Problem AI Solves

Academic writing often suffers from unnecessarily complex sentence structure, passive voice, and jargon that makes papers harder to read than they need to be. Simplifying prose without changing meaning requires careful attention that's difficult to apply to one's own writing.

How AI Helps (Correctly)

You provide AI with a paragraph or section of your paper and ask it to simplify the language, convert passive voice to active voice, or improve clarity. You then review the suggestions to ensure the simplified version still conveys the precise meaning you intended.

Example prompt: "Simplify this paragraph from my introduction. Convert passive voice to active voice where possible, reduce average sentence length, and replace jargon with clearer alternatives. Keep the technical accuracy intact."

Where This Fails

This approach fails when AI oversimplifies technical content, losing precision in favor of accessibility. Scientific writing often requires specific terminology because common language doesn't have sufficiently precise equivalents. AI will sometimes replace technical terms with casual approximations that change meaning.

Implementation rule: Use AI to suggest clarity improvements, but verify that simplified language preserves technical precision. Don't accept suggestions that trade accuracy for readability.

Use Case 8: Conference Abstract and Bio Writing

The Problem AI Solves

Conference abstracts and speaker biographies require distilling complex research into 150-300 words that are both accessible and compelling. Most researchers find this type of writing awkward because it requires selling work in a way that feels promotional.

How AI Helps (Correctly)

You provide AI with your research paper or CV and ask it to draft an abstract or bio that fits the conference requirements. AI produces a first draft that emphasizes accessibility and impact, which you then edit for accuracy and tone.

Example prompt: "Using my attached CV, write a 150-word speaker biography for a medical conference aimed at clinicians. Emphasize my translational research and clinical collaborations rather than pure basic science. Use third person."

Where This Fails

This approach fails when the AI draft overstates findings ("groundbreaking" research, "revolutionary" methods) or misrepresents the work's stage (describing preliminary findings as definitive results). Conference organizers and reviewers often recognize this kind of inflated promotional language, and it damages credibility.

Implementation rule: Use AI to draft conference materials, but edit ruthlessly to remove promotional language and ensure all claims are supportable.

Key Insight: The line between using AI as a tool and using AI as a crutch comes down to verification effort. If you spend more time verifying AI output than you would have spent doing the task properly yourself, you're using AI wrong. The tool should reduce total time, not just shift it from creation to verification.

Use Case 9: Brainstorming Research Questions

The Problem AI Solves

Developing research questions requires understanding what has been done, what remains unknown, and what is technically feasible. The brainstorming phase often feels unproductive because most ideas either duplicate existing work or prove impractical.

How AI Helps (Correctly)

You provide AI with a description of your research area and current knowledge gaps, and ask it to suggest 10-20 potential research questions. Most suggestions will be either obvious or unworkable, but the exercise often surfaces one or two angles you hadn't considered, which you then evaluate properly.

Example prompt: "I study memory consolidation in sleep. Recent work has shown that slow-wave sleep correlates with memory improvement, but the mechanism isn't clear. Generate 15 research questions that could help identify the mechanism. Consider molecular, cellular, and systems-level approaches."

Where This Fails

This approach fails if you treat AI-suggested research questions as vetted or feasible. AI has no understanding of what is technically possible with current methods, what has been tried but unpublished, or which questions are considered important versus trivial in your specific field. Every AI-suggested question requires the same scrutiny you'd apply to any research idea.

Implementation rule: Use AI brainstorming to surface ideas you might not have considered, but evaluate every suggestion with the same rigor you'd apply to ideas from any other source.

Use Case 10: Lecture Slide Content Generation

The Problem AI Solves

Creating lecture slides requires converting detailed knowledge into concise bullet points, selecting appropriate examples, and structuring content for verbal delivery. This transformation from expert knowledge to teaching material is time-intensive.

How AI Helps (Correctly)

You provide AI with learning objectives or textbook sections and ask it to generate bullet-point content for slides, suggest examples that illustrate concepts, or create comparison tables. You then refine the content to match your teaching style and add discipline-specific nuance.

Example prompt: "Create bullet-point slide content for a 50-minute lecture on Bayesian vs. Frequentist statistics for undergraduates with no prior statistics background. Include 3 concrete examples that illustrate when each approach would be used. Aim for 10-12 slides worth of content."

Where This Fails

This approach fails when AI-generated content is used without checking examples for correctness or appropriateness. AI frequently invents examples that are either factually wrong, culturally inappropriate, or inadvertently biased. Every example requires verification before presenting to students.

Implementation rule: Use AI to generate first-draft lecture content, but verify every example, check all facts, and ensure the presentation matches your pedagogical approach.

When Not to Use AI: Clear Boundaries for Research Integrity

Having established useful applications, it's equally important to identify contexts where AI use is either prohibited by academic standards or produces outputs that are professionally unacceptable regardless of technical capability.

1. Never Use AI for Peer Review

Peer review requires expert judgment about methodological soundness, significance to the field, and appropriate interpretation of results. AI cannot provide this judgment, and sharing an unpublished manuscript with an AI tool to draft a review violates the confidentiality agreement implicit in peer review. If you're too busy to review a paper properly, decline the review request.

2. Never Use AI to Generate Research Results or Data

This should be obvious, but the temptation exists: AI cannot generate experimental data, statistical results, or research findings. Any output AI produces in response to "generate data for X" is fabricated. Using such output constitutes research fraud.

3. Don't Use AI for Initial Student Assessment

Using AI to grade student assignments or provide initial feedback on student work is problematic because AI cannot assess reasoning quality, only surface features of text. Students receive feedback on how their writing sounds, not on whether their thinking is correct. If grading requires expertise, AI cannot provide it.

4. Don't Use AI for Citation Verification

AI frequently invents citations that look correct but don't exist: real author names paired with plausible-sounding titles and nonexistent publication venues. Every citation AI provides must be verified in the actual literature. Using AI to check whether citations are correct is particularly dangerous because it will confidently confirm fabricated citations.
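Verification against the actual literature can be partly scripted when a citation carries a DOI. The sketch below looks the DOI up through the public Crossref REST API and compares the registered title with the cited one. The helper names are hypothetical, it assumes network access, and a DOI missing from Crossref is not proof of fabrication, since some DOIs are registered with other agencies. Treat a match as a first check, not as confirmation that the paper says what the citation claims.

```python
import json
import urllib.error
import urllib.parse
import urllib.request

CROSSREF_WORKS_URL = "https://api.crossref.org/works/"


def crossref_url(doi):
    """Build the Crossref REST API URL for a DOI."""
    return CROSSREF_WORKS_URL + urllib.parse.quote(doi.strip(), safe="/")


def fetch_registered_title(doi, timeout=10):
    """Return the title Crossref has on record for a DOI, or None if not found."""
    try:
        with urllib.request.urlopen(crossref_url(doi), timeout=timeout) as response:
            record = json.load(response)
    except urllib.error.HTTPError as error:
        if error.code == 404:
            return None  # Crossref has no record of this DOI.
        raise
    titles = record["message"].get("title", [])
    return titles[0] if titles else None


def titles_match(cited_title, registered_title):
    """Compare titles, ignoring case, punctuation, and spacing differences."""
    def normalize(text):
        return "".join(ch for ch in text.lower() if ch.isalnum())
    return normalize(cited_title) == normalize(registered_title)
```

In use, you would call `fetch_registered_title` with the cited DOI and pass the result to `titles_match` along with the title the AI supplied. Anything that returns `None` or fails to match goes on the list for manual lookup.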

5. Don't Use AI for Institutional Decision-Making

Using AI to make or inform decisions about hiring, tenure, promotion, or graduate admissions is inappropriate because AI cannot assess the context-specific factors that determine whether someone is a good fit for a position or program. These decisions require human judgment about complex, often unstated criteria.

Which AI Tools to Use: A Practical Comparison

The landscape of AI tools changes frequently, but as of early 2025, several platforms are particularly relevant for academic use:

ChatGPT (OpenAI)

Best for: General-purpose text generation, code generation, and conversational interaction.

Limitations: Cannot access external sources reliably, frequently invents citations, and has no built-in fact-checking.

Claude (Anthropic)

Best for: Longer documents, analysis of uploaded files, and tasks requiring careful instruction-following.

Limitations: Citation issues similar to ChatGPT's, though generally more cautious about making claims.

Consensus and Elicit

Best for: Searching academic literature with AI assistance. These tools search actual databases and return real papers.

Limitations: Still requires verification, but significantly more reliable than general AI for literature search.

GitHub Copilot

Best for: Code generation and completion within development environments.

Limitations: Generates code based on patterns in training data, which may include inefficient or incorrect approaches.

Navigating Institutional AI Policies

Most universities are developing AI use policies for research and teaching, but implementation varies significantly. Here's what you need to know:

Disclosure Requirements

Many journals now require disclosure of AI use in manuscript preparation. This typically means including a statement in the methods or acknowledgments section describing which AI tools were used and for what purposes. These requirements are still evolving, so check current journal guidelines.

Student Use Policies

If you allow (or prohibit) AI use in your courses, communicate this explicitly in your syllabus. Ambiguity causes problems: students assume AI is either always acceptable or never acceptable, and both assumptions lead to policy violations. Specify which uses are permitted (brainstorming, editing) and which aren't (writing assignment answers).

Data Privacy Considerations

Free versions of AI tools may use your inputs for training. Don't upload unpublished research, student data, confidential manuscript reviews, or proprietary information to AI platforms unless you're using a version with explicit data protection guarantees.

Building an Effective AI Workflow

The researchers who benefit most from AI tools are those who integrate them into specific parts of their workflow rather than using them opportunistically. Here's how to build a sustainable AI-assisted research process:

Step 1: Identify Bottlenecks

Track your time for one week and identify tasks that (a) are necessary for research quality, (b) are time-consuming, and (c) involve transformation or synthesis rather than novel reasoning. These are candidates for AI assistance.

Step 2: Develop Specific Prompts

Rather than using AI ad hoc, develop a library of prompts for recurring tasks. Save prompts that work well, refine them over time, and share them with lab members. Good prompts include the desired output format, specific constraints, and examples of acceptable outputs.
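A prompt library does not need special tooling. A shared file of named templates is enough. The sketch below is one possible structure, not a prescribed one: the `PromptTemplate` class and the `compress_section` entry are hypothetical, modeled on the grant-proposal prompt from Use Case 2, and it relies only on the standard library's `string.Template`.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptTemplate:
    """A reusable prompt with named placeholders (hypothetical structure)."""

    name: str
    template: str

    def render(self, **fields):
        # Template.substitute raises KeyError if a placeholder is left unfilled,
        # which catches half-completed prompts before they are sent.
        return Template(self.template).substitute(**fields)


PROMPT_LIBRARY = {
    "compress_section": PromptTemplate(
        name="compress_section",
        template=(
            "Rewrite this $section section for $audience. "
            "The current version is $current_words words; reduce it to "
            "$target_words words without losing key methodological details.\n\n"
            "$text"
        ),
    ),
}

prompt = PROMPT_LIBRARY["compress_section"].render(
    section="methods",
    audience="a biologist who is not a specialist in proteomics",
    current_words=800,
    target_words=500,
    text="(paste section text here)",
)
print(prompt)
```

Keeping the constraints (audience, word counts) as named fields makes it obvious what must be filled in each time, and the file can live in the lab's shared repository alongside analysis code.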

Step 3: Build Verification Steps

For each AI-assisted task, create a checklist of what must be verified before the output is used. For code: does it run, does it produce expected outputs on test data, is the algorithm correct? For writing: is the content factually accurate, is the tone appropriate, are citations real?

Step 4: Measure Time Savings

Track whether AI tools actually save time once verification is included. Some tasks that appear to benefit from AI (literature review, statistical analysis) may not save time once proper verification is factored in. Focus your AI use on tasks where net time savings are real.
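The bookkeeping for this step is simple arithmetic: net savings equal the time the task takes manually minus the combined time for AI drafting and verification. A minimal sketch, with hypothetical task names and made-up minute counts standing in for your own tracked figures:

```python
from dataclasses import dataclass


@dataclass
class TaskTiming:
    """Minutes per task, averaged over a tracking period (hypothetical figures)."""

    task: str
    manual_minutes: float
    ai_drafting_minutes: float
    verification_minutes: float

    @property
    def net_savings(self):
        # Positive means the AI-assisted route is faster overall.
        return self.manual_minutes - (self.ai_drafting_minutes + self.verification_minutes)


timings = [
    TaskTiming("decline email", manual_minutes=15,
               ai_drafting_minutes=2, verification_minutes=3),
    TaskTiming("literature synthesis", manual_minutes=240,
               ai_drafting_minutes=30, verification_minutes=230),
]

# Keep AI in the workflow only where it saves time after verification.
worth_keeping = [t.task for t in timings if t.net_savings > 0]
for t in timings:
    print(f"{t.task}: net savings {t.net_savings:+.0f} min")
```

With these illustrative numbers, the email task comes out ahead and the literature synthesis does not. Your own measurements may differ in either direction.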

The Core Principle: AI as Tool, Not Replacement

The most successful academic users of AI treat these tools as sophisticated assistants who can draft, reorganize, and suggest, but cannot think, verify, or judge. When AI output looks correct, that's the beginning of evaluation, not the end. The work of verifying, refining, and taking responsibility for accuracy remains entirely human.

Researchers who expect AI to replace expert judgment consistently produce work that is surface-level plausible but substantively flawed. Researchers who use AI to accelerate mechanical tasks while maintaining full responsibility for intellectual content find that AI genuinely improves productivity without compromising quality.

Frequently Asked Questions

Do I need to cite AI tools when I use them?

Journal requirements vary, but the emerging standard is to disclose AI use in an acknowledgments or methods section rather than citing the tool as a source. For example: "ChatGPT (GPT-4, OpenAI) was used to edit and improve the clarity of the manuscript text. All content was verified by the authors." Check specific journal guidelines.

Can AI tools access my university's library databases?

General AI tools like ChatGPT and Claude cannot access subscription databases directly. They can work with documents you upload, but they cannot search your library's licensed content. Specialized tools like Consensus and Elicit can search some academic databases, but coverage varies.

How do I know if a student used AI on an assignment?

AI detection tools have high false-positive rates and should not be relied upon for academic integrity decisions. Instead, design assignments that are difficult to complete with AI alone: require specific evidence from course materials, include reflection on the writing process, or use in-class components. Focus on pedagogical design rather than detection technology.

Is it ethical to use AI for research that could lead to publications?

Yes, with appropriate disclosure and verification. Using AI to draft portions of a manuscript, generate code, or synthesize literature is acceptable if you take full responsibility for the accuracy and integrity of the final output. AI is a tool, like statistical software or literature management systems, not a co-author. The key ethical requirement is that you verify all AI-generated content and disclose the AI's role.

Should I teach with AI or teach against it?

False dichotomy. Teach students to use AI as a tool for appropriate tasks (brainstorming, editing, code generation) while being explicit about tasks where AI cannot replace human expertise (critical analysis, synthesis of evidence, methodological design). The goal is not to prohibit AI use but to develop students' judgment about when and how to use it responsibly.
